Does AI actually help students learn? A recent experiment in a high school provides a cautionary tale.

Researchers at the University of Pennsylvania found that Turkish high school students who had access to ChatGPT while doing practice math problems did worse on a math test compared with students who didn’t have access to ChatGPT. Those with ChatGPT solved 48 percent more of the practice problems correctly, but they ultimately scored 17 percent worse on a test of the topic that the students were learning.

A third group of students had access to a revised version of ChatGPT that functioned more like a tutor. This chatbot was programmed to provide hints without directly divulging the answer. The students who used it did spectacularly better on the practice problems, solving 127 percent more of them correctly compared with students who did their practice work without any high-tech aids. But on a test afterwards, these AI-tutored students did no better. Students who just did their practice problems the old fashioned way — on their own — matched their test scores.

Because AI, and Google searches before it, are not a substitute for having knowledge and experience. You can learn by googling something and reading about how it works so you can figure out answers for yourself. But googling for answers will not teach you much. Even if it solves a problem, you won’t learn how, and you won’t be able to fix something in the future without googling the answer again.

If you don’t learn how to do something, you won’t be experienced enough to know when you are doing it wrong.

I use Google to give me answers all the time when I’m problem solving. But I have to spend a lot more time after the fact to learn why what I did fixed the problem.

Nope, it doesn’t work like this. Sometimes you need someone to explain, especially in math. YouTube can take that spot, but not always.

That’s what I am saying. You need to learn it. If someone explains it to you, then you are learning. If someone just gives you the answer, then you don’t understand it, so you end up worse at it.

You agree with me…

Kids using an AI system trained on edgelord Reddit posts aren’t doing well on tests?

Ya don’t say.

Yea, this highlights a fundamental tension I think: sometimes, perhaps oftentimes, the point of doing something is the doing itself, not the result.

Tech is hyper focused on removing the “doing” and reproducing the result. Now that it’s trying to put itself into the “thinking” part of human work, this tension is making itself unavoidable.

I think we can all take it as a given that we don’t want to hand total control to machines, simply because of accountability issues. Which means we want a human “in the loop” to ensure things stay sensible. But the ability of that human to keep things sensible requires skills, experience and insight. And all of the focus our education system now has on grades and certificates has led us astray into thinking that the practice and experience doesn’t mean that much. In a way the labour market and employers are relevant here in their insistence on experience (to the point of absurdity sometimes).

Bottom line is that we humans are doing machines, and we learn through practice and experience, in ways I suspect much closer to building intuitions. Being stuck on a problem, being confused and getting things wrong are all part of this experience. Making it easier to get the right answer is not making education better. LLMs likely have no good role to play in education and I wouldn’t be surprised if banning them outright in what may become a harshly fought battle isn’t too far away.

All that being said, I also think LLMs raise questions about what it is we’re doing with our education and tests and whether the simple response to their existence is to conclude that anything an LLM can easily do well isn’t worth assessing. Of course, as I’ve said above, that’s likely manifestly rubbish … building up an intelligent and capable human likely requires getting them to do things an LLM could easily do. But the question still stands I think about whether we need to also find a way to focus more on the less mechanical parts of human intelligence and education.

LLMs likely have no good role to play in education and I wouldn’t be surprised if banning them outright in what may become a harshly fought battle isn’t too far away.

While I agree that LLMs have no place in education, you’re not going to be able to do more than just ban them in class unfortunately. Students will be able to use them at home, and the alleged “LLM detection” applications are no better than throwing a dart at the wall. You may catch a couple students, but you’re going to falsely accuse many more. The only surefire way to catch them is them being stupid and not bothering to edit what they turn in.

Would kids do better if the AI doesn’t hallucinate?

Paradoxically, they would probably do better if the AI hallucinated more. When you realize your tutor is capable of making mistakes, you can’t just blindly follow their process; you have to analyze and verify their work, which forces a more complete understanding of the concept, and some insight into what errors can occur and how they might affect outcomes.

Would snails be happier if it kept raining? What can we do to make it rain forever and all time?

no shit

“Tests designed for people who don’t use ChatGPT are performed better by people who don’t”

This is the same fn calculator argument we had 20 years ago.

A tool is a tool. It will come in handy, and if it will be there in real life, then a test that bans it is a dumb test.

The main goal of learning is learning how to learn, or learning how to figure new things out. If “a tool can do it better, so there is no point in not allowing it” was the metric, we would be doing a disservice because no one would understand why things work the way they do, and thus be less equipped to further our knowledge.

This is why I think common core, at least for math, is such a good thing because it teaches you methods that help you intuitively figure out how to get to the answer, rather than some mindless set of steps that gets you to the answer.

The point of learning isn’t just access to that information later. That basic understanding gets built on all the way up through the end of your education, and is the base to all sorts of real world application.

There’s no overlap at all between people who can’t pass a test without an LLM and people who understand the material.

This is ridiculous. The world doesn’t have to bend the knee to LLMs, they’re supposed to be useful tools to solve problems.

And I don’t see why asking them to help with math problems would be unreasonable.

And even if the formulation of the test was not done the right way, your argument is still invalid. LLMs have been used as an aid. The test wasn’t given to the LLM directly. But students failed to use the tool to their advantage.

This is yet another hint that the grift doesn’t actually serve people.

Another thing these bullshit machines can’t do! The list is getting pretty long.

About the calculator argument… Well, the calculator is still used in class, because it makes sense in certain contexts. But nobody ever sold calculators saying they would teach you math and would be a do-everything machine.

Also actual mathematicians are pretty much universally capable of doing many calculations to reasonable precision in their head, because internalizing the relationships between numbers and various mathematical constructs is necessary to be able to reason about them and use them in more than trivial ways.

Tests for recall aren’t because the specific piece of information is the point. They’re because being able to retrieve the information is essential to integrate it into scenarios where you can utilize it, just like being able to do math without a calculator is needed to actually apply math in ways that aren’t prescribed for you.

proscribed

err… I’m finding it hard to understand the meaning of the sentence using the dictionary meaning of this word. Did you mean to use some other word?

I’d love to tell you how the hell I got there. My brain exploded I guess. I meant prescribed, in the sense that you’re following the exact script someone laid out before you.

I had a physics class in college where we spent each section working through problems to demonstrate the concepts. You were allowed a page “cheat sheet” to use on the exams, and the exams were pretty much the same problems with the numbers changed. Lots of people got As in that class. Not many learned basic physics.

A lot of people don’t get further than that in math, because they don’t understand the basic building blocks. Plugging numbers into a formula isn’t worthless, and a calculator helps that. But it doesn’t help you once the problem changes a little instead of just the inputs.

You were allowed a page “cheat sheet” to use on the exams, and the exams were pretty much the same problems with the numbers changed.

That seems like the worst way of making an exam.

If the cheat sheet were not there, it would at least be testing something (i.e. how many formulae you memorised), albeit something useless. When you let students have a cheat sheet, it is supposed to be obvious that this will be a HOTS (higher order thinking skills) test. Well, maybe for teachers lacking said HOTS, it was not obvious.

Yeah, I’m all for “you don’t have to memorize every formula”, but I would have just provided a generic formula sheet and made people at least get from there to the solutions, even if you did the same basic problems.

It’s hard for me to objectively comment on the difficulty of the material because I’d already had most of the material in high school physics and it was pretty much just basic algebra to get from any of the formulas provided to the solution, but the people following the sheets took the full hour to do the exams that took me 5 minutes without the silly cheat sheet, because they didn’t learn anything in the class.

Fun to see how this thread stemmed from “no shit”.

I mean you’re right, but also, anybody who is an actual mathematician has no idea how to add 6+17, mostly only being concerned with “why” is 6+17, and the answer is something along the lines of bijective function space.

Source: what did I do to deserve this

I didn’t mean it that way, I really just meant the discussion is idiotic

As someone who has taught math to students in a classroom, unless you have at least a basic understanding of HOW the numbers are supposed to work, the tool - a calculator - is useless. While getting the correct answer is important, I was more concerned with HOW you got that answer. Because if you know how you got that answer, then your ability to get the correct answer skyrockets.

Because doing it your way leads to blindly relying on AI and believing those answers are always right. Because it’s just a tool right?

Nowhere did I say a kid shouldn’t learn how to do it. I said it’s a tool, and I’m saying it’s a dumb argument/discussion.

If I said students who only ever used a calculator didn’t do as well on a test where calculators weren’t allowed, you would say “yeah, no shit”.

This is just an anti-technology, anti-new-generation separation piece that divides people and will ultimately create rifts that help us ignore real problems.

Shocked, I tell you!

Maybe, if the system taught more of HOW to think and not WHAT. Basically more critical thinking/deduction.

This same kinda topic came up back when I was in middle/high school, when search engines became widespread.

However, LLMs shouldn’t be trusted for factual anything, same as Joe Blow’s blog on some random subject. Did they forget to teach cross-referencing too? I’m sounding too bitter and old so I’ll stop.

However, LLMs shouldn’t be trusted for factual anything, same as Joe Blow’s blog on some random subject.

Podcasts are 100% reliable tho

See also: competitive cognitive artifacts. https://philosophicaldisquisitions.blogspot.com/2016/09/competitive-cognitive-artifacts-and.html

These are artifacts that amplify and improve our abilities to perform cognitive tasks when we have use of the artifact but when we take away the artifact we are no better (and possibly worse) at performing the cognitive task than we were before.

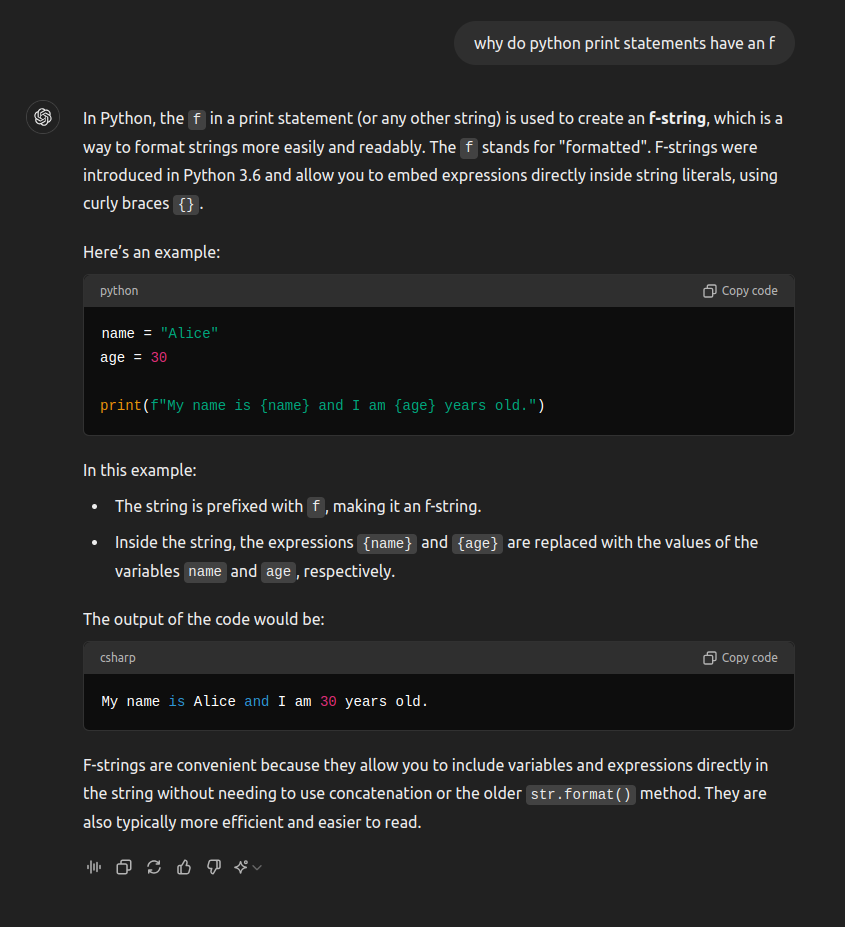

At work we gave a 16/17-year-old work experience over the summer. He was using ChatGPT and not understanding the code it was outputting.

In his last week he asked why he was doing a print statement, something like

print(f"message {thing} ")

Sounds like operator error, because he could have asked ChatGPT and gotten the correct answer about Python f-strings…
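For anyone wondering what the `f` in that line actually does, here is a minimal sketch. The value assigned to `thing` is made up for illustration, since the original comment never says what it held.

```python
# Hypothetical value; the original comment does not say what `thing` was.
thing = "hello"

# The f prefix makes this a formatted string literal (f-string):
# the expression inside the braces is evaluated and substituted into the text.
with_f = f"message {thing} "

# Without the f prefix, the braces and the name are kept as literal characters.
without_f = "message {thing} "

# An older, equivalent spelling using str.format().
with_format = "message {} ".format(thing)

print(with_f)
print(without_f)
print(with_format)
```

So the line itself is fine; the question is only whether the person typing it knows why the `f` is there.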

It all depends on how and what you ask it, plus an element of randomness. Remember that it’s essentially a massive text predictor. The same question asked in different ways can lead it into predicting text based on different conversations it trained on. There’s a ton of people talking about python, some know it well, others not as well. And the LLM can end up giving some kind of hybrid of multiple other answers.

It doesn’t understand anything, it’s just built a massive network of correlations such that if you type “Python”, it will “want” to “talk” about scripting or snakes (just tried it, it preferred the scripting language, even when I said “snake”, it asked me if I wanted help implementing the snake game in Python 😂).

So it is very possible for it to give accurate responses sometimes and wildly different responses at other times. Like with the African countries that start with “K” question, I’ve seen reasonable responses and meme ones. It’s even said there are none while also acknowledging Kenya in the same response.
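The “text predictor plus an element of randomness” idea can be sketched with a toy example. This is not how any real LLM is implemented; the words and probabilities below are invented purely to show why the same prompt can produce different continuations from one run to the next.

```python
import random

# Made-up probabilities for the word that follows "Python"; illustration only.
next_word_probs = {"script": 0.6, "snake": 0.3, "game": 0.1}


def sample_next_word(probs, rng):
    """Pick one continuation at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random()  # unseeded, so repeated runs can give different results
samples = [sample_next_word(next_word_probs, rng) for _ in range(10)]
print(samples)
```

Most samples will tend to be the most likely word, but the less likely ones can still show up, which is roughly why one person gets a sensible answer and another gets a meme.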

Students first need to learn to:

- Break down the line of code, then

- Ask the right questions

The student in question probably didn’t develop the mental faculties required to think, “Hmm… what the ‘f’?”

A similar thingy happened to me when I had to teach a BTech grad with 2 years of prior exp. At first, I found it hard to believe that someone couldn’t ask such questions of themselves, by themselves. I am repeatedly dumbfounded at how someone manages to be so ignorant of something they are typing, and I am recently realising (after interaction with multiple such people) that this is actually the norm[1].

[1] and that I am the weirdo for trying hard and visualising the C++ abstract machine in my mind

No. Printing statements, using console inputs and building little games like tic tac toe and crosswords isn’t the right way to learn Computer Science. It is the way things are currently done, but you learn much more through open source code and trying to build useful things yourself. I would never go back to doing those little chores to get a grade.

I would never go back to doing those little chores to get a grade.

So either you have finished obtaining all the academic certifications that require said chores, or you are going to fail at getting a grade.

I’m afraid to ask, but what’s wrong with that line? In the right context that’s fine to do, no?

I’ve found AI helpful when asking it to explain stuff. Why is the problem solved like this, why did you use this and not that, could you put it in simpler terms and so on. Much like you might ask a teacher.

I think this works great if the student is interested in the subject, but if you’re just trying to work through a bunch of problems so you can stop working through a bunch of problems, it ain’t gonna help you.

I have personally learned so much from LLMs (although you can’t really take anything at face value and have to look things up independently, but it gives you a great starting place), but it comes from a genuine interest in the questions I’m asking and things I dig at.

I have personally learned so much from LLMs

No offense, but that’s what the article is also highlighting, namely that students, even the good ones, believe they did learn. Once it’s time to pass a test designed to evaluate whether they actually did, it’s not that positive.

To an extent, but it’s often just wrong about stuff.

It’s been a good second step for things I have questions about that I can’t immediately find good search results for. I don’t wanna get off topic but I have major beef with Stack Overflow and posting questions there makes me anxious as hell because I’ll do so much diligence to make sure it is clear, reproducible, and not a duplicate only for my questions to still get closed. It’s a major fucking waste of my time. Why put all that effort in when it’s still going to get closed?? Anyways – ChatGPT never gets mad at me. Sure, it’s often wrong as hell but it never berates me or makes me feel stupid for asking a question. It generally gets me close enough on topics that I can search for other terms in search engines and get different results that are more helpful.

Yep. My first interaction with GPT pro lasted 36 hours and I nearly changed my religion.

AI is the best thing to come to learning, ever. If you are a curious person, this is bigger than Gutenberg, IMO.

That sounds like a manic episode

I mean, is it really that surprising? You’re not analyzing anything, an algorithm just spits text at you. You’re not gonna learn much from that.

You could always try reading the article

Which, in a fun bit of meta, is a decent description of artificial “intelligence” too.

Maybe the real ChatGPT was the children we tested along the way

In the study they said they used a modified version that acted as a tutor, that refused to give direct answers and gave hints to the solution instead.

So it’s still not surprising since ChatGPT doesn’t give you factual information. It just gives you what it statistically thinks you want to read.

That’s like cheating with extra steps.

Ain’t getting hints on your in class exam.

The title is pretty misleading. Kids who used ChatGPT to get hints/explanations rather than outright getting the answers did as well as those who had no access to ChatGPT. They probably had a much easier time studying/understanding with it so it’s a win for LLMs as a teaching tool imo.

Is it really a win for LLMs if the study found no significant difference between those using it as a tutor and those not?

As another poster questioned, if it saved them time then, yes, it is absolutely a win. But if they spent the same amount of time, I would agree with you that it’s not a win.

Ok fair enough, I see how that would be a win.

Maybe using LLM assistance was less stressful or quicker than self study. The tutoring-focused LLM is definitely better than allowing full access to GPT itself, which is what is currently happening.

Not everyone can afford a tutor or knows where to find an expert that can answer questions in any given domain. I think such a tool would have made understanding a lot of my college courses a lot easier.

Unsurprised

I would have no problem with AI if the shit actually worked

No, I think the point here is that the kids never learned the material, not that AI taught them the wrong material (though there is a high possibility of that).

Yes, yet there is indeed a deeper point. If the AI is to be used as a teaching tool, it still has to give genuinely useful advice, not good-sounding advice that might actually still be wrong. LLMs can feed wrong final answers, but they can also make poor suggestions on the process itself. So both are problematic: how the tool is used, but also its intrinsic limitations.

This isn’t a new issue. Wolfram Alpha has been around for 15 years and can easily handle high school level math problems.

Except Wolfram Alpha is able to correctly explain step-by-step solutions. Which was an aid in my education.

Only old farts still use Wolfram

What do young idiots use?

ChatGPT apparently lol

I can’t remember, but my dad said before he retired he would just pirate Wolfram because he was too old to bother learning whatever they were using. He spent 25 years in academia teaching graduate chem-e before moving to the private sector. He very briefly worked with one of the Wolfram founders at UIUC.

Where did you think you were?

Did those using the tutor AI spend less time on learning? That would have been worth measuring.

Interesting thought, I would be curious about this too.