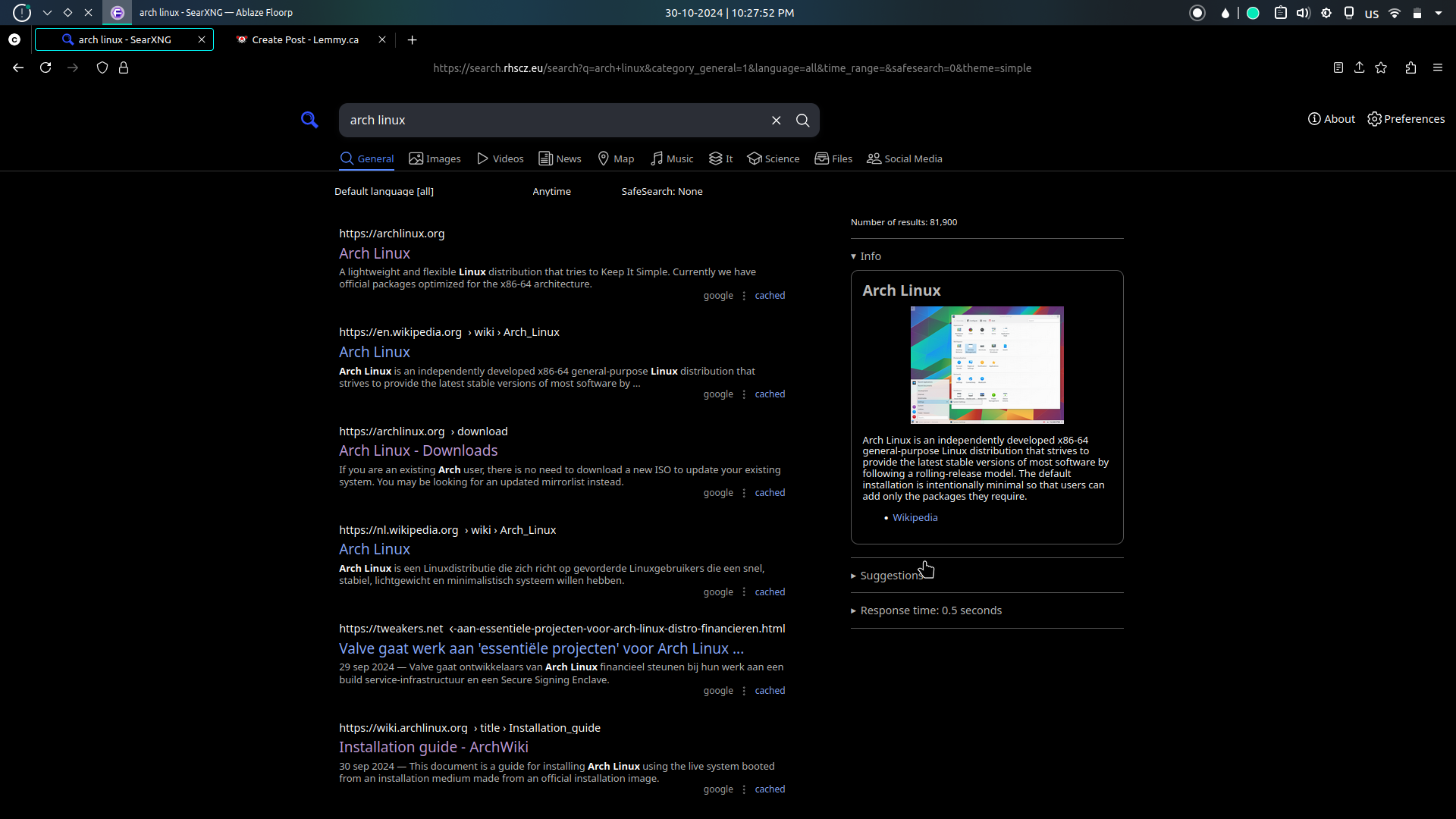

Actually an awesome and fast search engine (depending on which instance you use), with no trashy AI or ad results. It’s also great for privacy. If you don’t know which instance to use, go to https://searx.space/ and choose the instance closest to you.

Dig an old PC out from somewhere, install a Linux distribution, Tailscale, and Docker/Podman, and install SearX that way.

What if you want to use the computer for something other than Google searching?

Install the other stuff on it

I stopped using it not because of the results but because you couldn’t swipe back without it sending you to the base website.

On DuckDuckGo (and Google and others), the search shows up in the URL. For example, searching for frog:

https://duckduckgo.com/?q=frog&t=fpas&ia=web

However, in SearXNG it just shows

https://searxng.world/search

I don’t have an issue with that by itself. However, when you click on a link and then go back to the search results, it has no idea what you searched for, since the query isn’t in the URL, so it shows an error.

That aside, the UI is great. Icons don’t swap around on you like they do on Google, and there are no annoying popups about ‘privacy’ like on DDG. As for the search results, they were good enough for me. Not great, but then again there aren’t any good search engines right now.
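The back-button problem comes down to where the query travels. A minimal sketch, assuming the instance submits its search form with POST: with GET the query is encoded into the URL (which browser history keeps), while with POST the URL is just `/search` and the query only lives in the request body. The `q` parameter name is an assumption here; the instance URL is the one from the example above.

```python
from typing import Optional, Tuple
from urllib.parse import urlencode

BASE = "https://searxng.world/search"  # instance URL from the example above

def get_request(query: str) -> Tuple[str, Optional[bytes]]:
    """GET: the query is encoded into the URL, so browser history keeps it."""
    return f"{BASE}?{urlencode({'q': query})}", None

def post_request(query: str) -> Tuple[str, Optional[bytes]]:
    """POST: the URL stays bare and the query only travels in the request body."""
    return BASE, urlencode({"q": query}).encode()

for build in (get_request, post_request):
    url, body = build("frog")
    print(f"{build.__name__}: url={url} body={body!r}")
```

If your instance’s preferences offer an HTTP method setting, switching it to GET should put the query back in the URL, at the cost of it also showing up in history and server logs.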

I checked it out, but most of the public instances I looked at use Google + Bing. I think I only came across one that used Mojeek, but they deranked it so Google results were still at the top.
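To see how a low weight can bury one engine’s results, here’s a toy merge. It’s loosely modeled on the weight/position style of scoring that searx-like metasearch engines use, but the exact formula, engine names, and weights are simplified assumptions, not SearXNG’s actual code.

```python
from collections import defaultdict

def merge(results_by_engine: dict[str, list[str]], weights: dict[str, float]) -> list[str]:
    """Score each URL by summing weight / rank over every engine that returned it."""
    scores: dict[str, float] = defaultdict(float)
    for engine, urls in results_by_engine.items():
        w = weights.get(engine, 1.0)  # engines without an explicit weight count as 1.0
        for rank, url in enumerate(urls, start=1):
            scores[url] += w / rank
    # Highest score first; ties broken alphabetically for stable output.
    return sorted(scores, key=lambda u: (-scores[u], u))

results = {
    "google": ["g1", "g2", "shared"],
    "mojeek": ["m1", "shared", "m2"],
}
print(merge(results, {"google": 1.0, "mojeek": 1.0}))  # → ['g1', 'm1', 'shared', 'g2', 'm2']
print(merge(results, {"google": 1.0, "mojeek": 0.3}))  # → ['g1', 'g2', 'shared', 'm1', 'm2']
```

With equal weights Mojeek’s top hit ties Google’s for first place; drop its weight to 0.3 and every Google result outranks it.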

Yeah, you can customize them – if you never clear your cookies.

Pretty much need to self-host it to customize it.

> if you never clear your cookies.

They let you use a link to save your settings instead, and that link can also be set as your default search engine.
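The idea behind a settings link is that your preferences travel in the URL instead of in a cookie. A minimal sketch, assuming the settings are packed as URL-encoded key/value pairs, compressed, and base64-encoded; the `preferences` parameter name, the setting keys, the `searx.example` host, and the exact encoding are illustrative assumptions, not necessarily what any instance produces.

```python
import base64
import zlib
from urllib.parse import parse_qsl, urlencode

def pack(settings: dict[str, str]) -> str:
    """Serialize settings into a compact, URL-safe token."""
    raw = urlencode(sorted(settings.items())).encode()
    return base64.urlsafe_b64encode(zlib.compress(raw)).decode()

def unpack(token: str) -> dict[str, str]:
    """Reverse pack(): decode, decompress, and parse the key/value pairs."""
    raw = zlib.decompress(base64.urlsafe_b64decode(token))
    return dict(parse_qsl(raw.decode()))

# Hypothetical settings; real instances use their own keys.
settings = {"language": "en", "safesearch": "0", "engines": "mojeek,qwant"}
token = pack(settings)
print(f"https://searx.example/?preferences={token}")
assert unpack(token) == settings  # the link alone is enough to restore everything
```

Because nothing lives in a cookie, clearing cookies doesn’t lose the settings as long as you keep the link.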

I’m not seeing that option anywhere. Nor does it let me change the “weight”. I found a GitHub discussion saying it should show up after you save the settings, but I tried that on two instances and didn’t get a unique URL.

Ah, I found it under the “cookies” tab. Needs to be more obvious IMO.

Did anyone else not know Lycos was still around? Lycos is still around. It seems not that bad, and there’s no AI stuff.

Aren’t all search queries visible to whoever hosts an instance? In my eyes this is much worse for privacy, and a much bigger risk unless you really know who is behind your chosen instance. I would trust a company a bit more to safeguard this information so it doesn’t leak to some random guy.

Companies are definitely selling your data. Use a VPN.

A VPN will not save you; VPNs can easily be worse for privacy in terms of user tracking. A VPN centralises your entire web traffic in a single place for the provider to track (and potentially sell).

You either trust the ISP or a VPN. It’s a tool, not a blanket of protection. OPSEC and knowing how to move are most important.

I’ve always gotten the impression it was mostly intended to be self-hosted. I’ve self-hosted it for a couple of years now, and it runs like clockwork. It still strips out tracking and advertising, even if you don’t get the crowd anonymity of a public instance.

Self-hosting doesn’t make sense as a privacy feature, because then it’s still just you making requests to Google or other search engines.

It’s not useless: it removes a lot of the tracking cookies and such, and the sponsored links loaded with telemetry. Theoretically you can also get the benefits of anonymity if you proxy through Tor or a VPN, which I originally tried to do, but it turns out Google at least blocks requests from Tor and from the VPN endpoint I have, and probably most of them. Google or whatever upstream search engine can still track you by IP when you self-host, but its tracking is going to be much less without the extra telemetry cookies and tracking code it gets when you use Google directly.

But yes, practically you either have to trust the instance you’re using to some extent or give up some of the anonymity. I opted to self-host and would personally recommend the same over using a public instance if you can. And if privacy is your biggest concern, only use upstream search providers that are (or rather, claim to be) more privacy-respecting, like DDG or Qwant. My main use case is as a better search frontend without junk sponsored results; privacy is more of a secondary benefit.
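As a rough illustration of the “removes tracking” part, here’s a minimal sketch that strips common tracking query parameters from a result URL. SearXNG ships a tracker URL remover plugin in this spirit, but the parameter list below is a small hand-picked assumption, not its actual rule set.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical, deliberately incomplete list of tracking parameters.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"fbclid", "gclid", "msclkid"}

def strip_trackers(url: str) -> str:
    """Return url with known tracking query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in TRACKING_KEYS and not k.startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))

print(strip_trackers("https://example.com/page?id=7&utm_source=news&gclid=abc"))
# → https://example.com/page?id=7
```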

FWIW, they have a pretty detailed discussion on why they recommend self-hosting here.

As someone who hosts an instance, news to me lol

Edit: Developer says this can’t be done currently? Reddit comment

It doesn’t bother me one bit if you know my search history. You’ll learn that I search words to see if I know how to spell them properly, and that I DIY a lot of stuff lol

Over a billion Chinese people also aren’t bothered that they live under surveillance, but it isn’t right.

Good thing it only happens to the Chinese…

Of course it can be done, check your web server logs.

If you are using GET requests to send search queries to SearXNG, what you searched for will show up in the logs as

`2024-10-31 123.321.0.100 /?query=kinky+furry+pictures`

If you use POST requests, the server admin can also easily enable logging those.

People hosting SearXNG can absolutely see what you searched for, along with your IP address, user agent string, etc.
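To make the point concrete, here’s a minimal sketch of how little it takes to pull queries back out of an access log like the one above. The log layout (date, IP, request path) and the `q`/`query` parameter names are assumptions based on that example, not a real web server’s format, and the sample lines use made-up documentation IPs.

```python
from urllib.parse import parse_qs, urlsplit

def extract_queries(log_lines):
    """Yield (ip, query) for every logged request that carries a search query."""
    for line in log_lines:
        fields = line.split()
        if len(fields) < 3:
            continue  # skip malformed lines
        _date, ip, path = fields[:3]
        params = parse_qs(urlsplit(path).query)
        for key in ("q", "query"):  # hypothetical parameter names
            if key in params:
                yield ip, params[key][0]
                break

sample = [
    "2024-10-31 192.0.2.10 /search?q=frog+legs",
    "2024-10-31 192.0.2.11 /static/style.css",
]
print(list(extract_queries(sample)))  # → [('192.0.2.10', 'frog legs')]
```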

Well my instance’s logs are sent to null for this reason already, but thank you for the info!

Thanks for the clarification, and it’s great that this isn’t included in the project, but couldn’t someone change the server-side code and somehow see more of the info that goes through?

I know https://searx.space/ has that HTML check to see whether the search interface code has been heavily modified, but on the server side anything could be going on.

If requests were encrypted in a way that SearXNG couldn’t see the contents, it probably wouldn’t be trivial to do, but there’s always the possibility that something clever could be done.
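Roughly, that kind of check compares what the instance serves to the browser against the upstream code. A minimal sketch of the idea, assuming you already have a table of expected SHA-256 hashes for the static files (the filenames and contents here are made up). It only covers what the browser receives, so it can’t see server-side changes.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def find_modified(served: dict[str, bytes], expected: dict[str, str]) -> list[str]:
    """Return names of served files whose hash doesn't match (unknown files are flagged too)."""
    return sorted(
        name for name, data in served.items()
        if expected.get(name) != sha256_hex(data)
    )

upstream = {"app.js": b"console.log('search');", "style.css": b"body{}"}
expected = {name: sha256_hex(data) for name, data in upstream.items()}

served = {**upstream, "app.js": b"console.log('search'); track();"}
print(find_modified(served, expected))  # → ['app.js']
```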

Man, I wish I had the same experience.

The couple of times I tried it out, the search results were barely accurate.

Try Kagi. It’s paid, at $5/mo., but you get 300 searches to try it out.

4get.ca has been great for me.

It’s OK at best, when it works. When it runs out of API hits for the day at noon, you need to use something like https://searx.neocities.org/ and retype your search multiple times until you hit an instance that can actually perform a search.

Also, no suggestions.
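The retype-until-one-works routine can be scripted. A minimal sketch, assuming a caller-supplied `fetch(instance, query)` function that returns a list of results or raises on rate limits and errors; the instance URLs are placeholders and no real HTTP is done here.

```python
from typing import Callable, List, Sequence, Tuple

# fetch(instance_url, query) -> list of results; raises on errors or rate limits.
Fetch = Callable[[str, str], List[str]]

def search_with_fallback(query: str, instances: Sequence[str], fetch: Fetch) -> Tuple[str, List[str]]:
    """Try instances in order and return the first (instance, results) with hits."""
    for instance in instances:
        try:
            results = fetch(instance, query)
        except Exception:  # rate limited, timed out, captcha page, etc.
            continue
        if results:  # treat an empty result list as a failure too
            return instance, results
    raise RuntimeError("no instance returned results")

# Stand-in fetcher with no real HTTP: a is rate limited, b returns nothing.
def fake_fetch(instance: str, query: str) -> List[str]:
    if instance == "https://a.example":
        raise TimeoutError("rate limited")
    if instance == "https://b.example":
        return []
    return [f"{query} result from {instance}"]

print(search_with_fallback("frog", ["https://a.example", "https://b.example", "https://c.example"], fake_fetch))
# → ('https://c.example', ['frog result from https://c.example'])
```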

I use this daily and just wanted to highlight two downsides:

1. Some instances are quite slow to respond.
2. Some instances are non-English, so everything except the search results might be unreadable unless you know that language.

The second one has been happening less frequently recently, though. Not sure if there are just more English instances or some other reason behind it.

Self-host it; it’s nothing to set up.

Doesn’t that defeat the only benefit - anonymity?

It strips the tracking data to and from the engines, so if you tuck it behind a VPN, GG.

Not if it sends its queries out via a VPN, where they mingle with thousands of other requests. An API call doesn’t have the disadvantages of browser fingerprinting, cookies, etc. that are used to build a profile of a user browsing to your search engine and track their searches. Also, there is no feedback to the search engine about which result you chose. If you allow outside users, it would further muddy the waters.

Ideally, you’d have it run random searches when not being used to further obfuscate the source.
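The random-searches idea could look something like this: a minimal sketch that plans decoy queries at jittered intervals. The word list, the interval range, and the idea of feeding these into your own instance are all illustrative assumptions; whether it actually defeats profiling is debatable.

```python
import random

# Hypothetical pool of innocuous decoy queries.
WORDS = ["weather", "recipe", "bike repair", "python tutorial", "bus times", "garden"]

def decoy_schedule(n: int, min_gap: float, max_gap: float, rng: random.Random):
    """Return n (seconds_from_now, query) pairs with random gaps between them."""
    t = 0.0
    plan = []
    for _ in range(n):
        t += rng.uniform(min_gap, max_gap)
        plan.append((round(t, 1), rng.choice(WORDS)))
    return plan

for when, query in decoy_schedule(3, 60, 600, random.Random(42)):
    print(f"+{when:>6}s  {query}")
```

A fixed seed keeps the output reproducible for testing; for real use you’d want an unseeded generator so the pattern isn’t predictable.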

btw

I mean, it’s often better than nothing, but it’s a metasearch engine that still often uses Google or Bing to gather results. IMHO, cut out the need for that data entirely and use an option like Mojeek.

The homepage took 5 seconds to load. I’ll pass.

Not at all for me

Not sure what’s happening there for you; speed is one of the things people frequently say we do well.

Let me tell you about waiting for Ask Jeeves to load up in the 90s.

Web crawler indeed

You can use Mojeek with SearXNG.

Nvm, with nothing enabled but Mojeek it returned no results. I wonder why that is?

I could be wrong, but didn’t Mojeek also index results from Google and Bing?

I’m wrong, they index their own results. I mean, Qwant is a search engine built in the EU and they index their own results.

Qwant is Bing, mainly.

For all their talk of doing things differently with their own index and rankings, Mojeek is following exactly what Google did. It’s still an ad-based business model that makes users into products to be sold to advertisers. They’re good now, while they’re still trying to build market share. But once their investors get hungry, the enshittification will commence.

We make money mainly from our API. Our investors are patient private capital and we don’t take VC. I appreciate your point, but these are fundamentally different situations. Our ads (when they run) will also be contextual, so it’s more of a DDG situation than one that “makes users into products to be sold to advertisers”.

Fair enough if it’s not for you, though.

API index access is an important difference.

If it were only that, without the public-facing, ad-driven search, I’d be more impressed. Maybe if you removed the ads and severely rate-limited your own public-facing search, so it’s more of a demo than an actual service. That would force you to make money solely off API access, without directly competing against those customers.

That would be an honest business model, one that doesn’t turn users into eyeballs for advertising, which seems to me to be the most insidious problem of the modern internet.

Agree to disagree here, but I’ll refer to Cory Doctorow for a contextual vs behavioral/tracking ads comparison, one which is very good: https://pluralistic.net/2020/08/05/behavioral-v-contextual/#contextual-ads (applied to the media, but the general thread is relevant)

Do you have topics that are censored? I searched for my Reddit post “what I’ve learnt from the mantis aliens”, and it doesn’t show up in your results. Nor in Google’s. But it does on other search engines. UFO/alien stuff is censored in most search engines, though there’s no reason for it to be. That is how I judge search engines, and Mojeek doesn’t give me the results I asked for.

Reddit doesn’t allow us to crawl: https://www.reddit.com/robots.txt
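For anyone curious what respecting robots.txt means mechanically, Python’s standard library ships a parser for it. A minimal sketch; the rules below are a made-up example of a site that blocks every crawler except one, not Reddit’s actual file, and `FriendlyBot`/`ExampleBot` are hypothetical user agents.

```python
from urllib.robotparser import RobotFileParser

# Made-up rules: allow one named crawler, block everyone else.
rules = """\
User-agent: FriendlyBot
Allow: /

User-agent: *
Disallow: /
""".splitlines()

parser = RobotFileParser()
parser.parse(rules)

url = "https://example.com/r/aliens/comments/abc"
for agent in ("FriendlyBot", "ExampleBot"):
    print(agent, "allowed" if parser.can_fetch(agent, url) else "blocked")
```

A well-behaved crawler runs this check before every fetch and simply skips anything that comes back blocked.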

Is that legally binding? What happens if they catch you? They ban your IPs, and then you’re in the same situation as now. Literally no reason not to do it IMO.

Our IP already hits a wall. It’s also better not to get a reputation as a bad bot; it’s taken a while to become known for being friendly and respecting the rules. To us, you should follow robots.txt.

I seem to recall creative ways to index things without robots, e.g. browser addon that users opt into to send pages and such, essentially crowdsourcing the indexing. Anyways good to see you’re taking the high road!

I don’t know if the comparison is inaccurate.

You make money from advertising to your users (“ddg situation” notwithstanding), are beholden to your investors (private status notwithstanding) and need to see more users to increase revenue. The person above you is saying that this model is what will drive you to eventually be as bad as Google. Do you understand?

We make money from our API; what they’re referencing is a beta ads programme that was running.


which language are we talking?

Mojeek reminds me of early Google results, which only searched the title and URL (inurl). I like it.

It’s pretty nice. The REST API for running searches makes running SearXNG worth it, if nothing else.

Could you elaborate on what you mean by that?
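Presumably this refers to SearXNG’s JSON output: if the instance has `json` enabled under `search: formats` in its settings.yml, `/search?q=...&format=json` returns results as JSON instead of HTML. A minimal sketch, assuming a self-hosted instance at `http://localhost:8080` (a placeholder) and a `results` list whose entries carry `title` and `url`; check your own instance’s response before relying on those field names. The sample payload is made up for illustration.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

def search_url(base: str, query: str) -> str:
    """Build a JSON search URL; needs 'json' in the instance's search formats."""
    return f"{base.rstrip('/')}/search?{urlencode({'q': query, 'format': 'json'})}"

def parse_results(payload: str) -> list[tuple[str, str]]:
    """Extract (title, url) pairs from a JSON response body."""
    data = json.loads(payload)
    return [(r.get("title", ""), r["url"]) for r in data.get("results", []) if "url" in r]

def search(base: str, query: str) -> list[tuple[str, str]]:
    """Fetch and parse results from a live instance (makes a network call)."""
    with urlopen(search_url(base, query), timeout=10) as resp:
        return parse_results(resp.read().decode("utf-8"))

# With a running instance: search("http://localhost:8080", "frog")
sample = '{"query": "frog", "results": [{"title": "Frog - Wikipedia", "url": "https://en.wikipedia.org/wiki/Frog"}]}'
print(parse_results(sample))  # → [('Frog - Wikipedia', 'https://en.wikipedia.org/wiki/Frog')]
```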

I really wish there was a privacy oriented search engine with decent search results …

At this point, I just wish there was a search engine with decent results …

I’d use it if its public instances didn’t get rate-limited so often

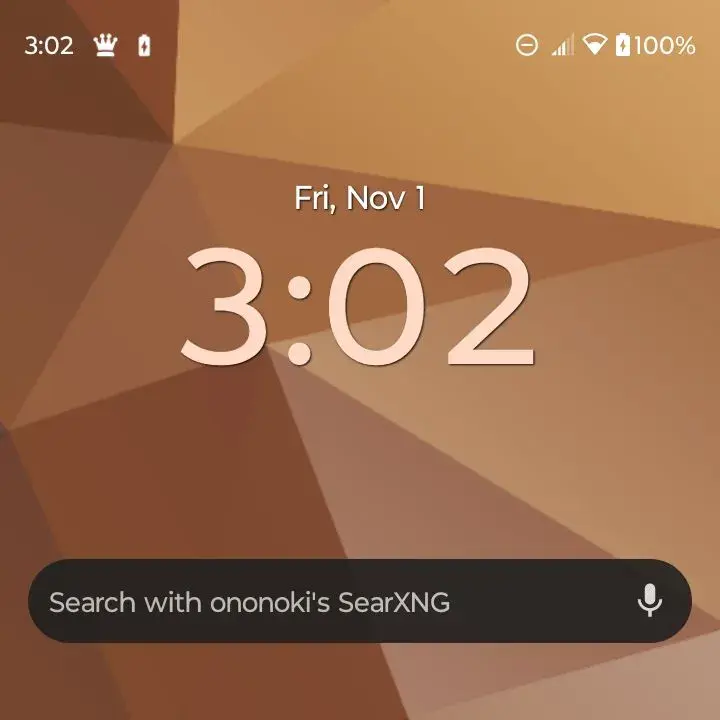

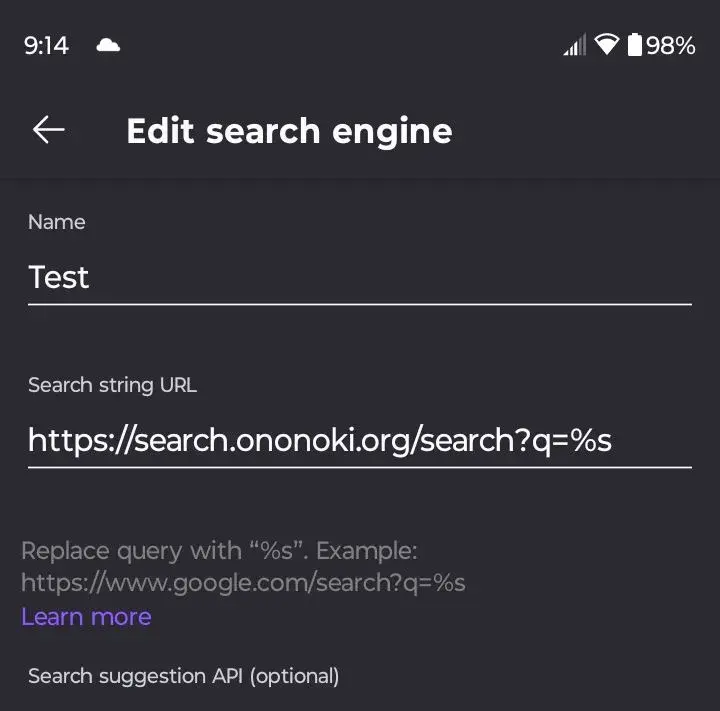

Does it have a widget? I want to integrate it in my workflow on my phone

Yeah, the Chrome one will convert.

Oh? I tried on Vanadium and Brave (no way I’m using Chrome) and all I have is the option to “web app” it.

Can you share the steps you used?

I couldn’t figure out a way in Brave; it won’t let you set a custom URL for the default search engine. Firefox let me just change the custom search engine link, in the format of

Couldn’t find an APK to test Vanadium; I assume it may be exclusive to GrapheneOS? (I just called it test for this, so name it something you’ll recognize in a few months.)

Once the default search engine is changed in Firefox/Chrome, their widgets pull that setting from the app, so it just searches that way.
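For reference, browsers’ custom search engine entries use a URL template where `%s` stands in for the search terms. A minimal sketch of that substitution; the `searx.example` host is a placeholder, and the `/search?q=%s` shape is an assumption about what a SearXNG instance accepts (it matches the GET form of its search URL). Browsers may encode spaces slightly differently; this uses `+`.

```python
from urllib.parse import quote_plus

TEMPLATE = "https://searx.example/search?q=%s"  # hypothetical instance URL

def expand(template: str, terms: str) -> str:
    """Replace the %s placeholder with URL-encoded search terms."""
    return template.replace("%s", quote_plus(terms))

print(expand(TEMPLATE, "mantis aliens"))  # → https://searx.example/search?q=mantis+aliens
```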

Oh, sweet. Thanks so much. It worked in Vanadium.

I get a lot of Simpsons pictures in the image results for some rsn

Some raisin

Some resin

Same here, but with hentai on searx.be