Places GPT-based “AI” next to flying cars

Flying cars exist, they’re just not cost effective. AFAICT there’s no GPT that is proficient at coding yet.

As far as I know, right now the main problem with flying cars is that they are nowhere near as idiot-proof as a normal car, and don’t really solve any transportation problem since most countries’ air regulations agencies would require them to exclusively take off and land in airports… Where you can usually find tons of planes that can go much further (and are much more cost effective, as you pointed out)

It’s a lot easier to access ChatGPT than it is to access a flying car

The more people use ChatGPT to generate low-quality code they don’t understand, the more job security and the higher the salary I get.

Garbage in, garbage out is how they all work. If you give it a well-defined prompt, you can get exactly what you want out of it most of the time, but if you just say “fix this problem”, it’ll just fix the problem, ignoring everything else.

You’re holding it wrong

Wait till this guy learns how a theremin works.

While I am not fond of AI, we do have access to it at work and I must admit that it saves some time in some cases. I’m not a developer with decades of experience in a single language, so one thing I use AI for is asking “Is it possible to do a one-liner in language X where it does Y?” It works very well and the code is rarely unusable, but it is still up to my judgement whether the AI came up with a clever use of functions that I didn’t know about or whether it crammed stuff into a single unreadable line.

Yep, by definition generative AI gets worse the more specific you get. If you need common templates though, it’s almost as good as today’s google.

… which is not a high bar.

Who are those guys they keep asking this question over and over? And how are they not able to use such a simple tool to increase their productivity?

Everyone keeps talking about autocomplete but I’ve used it successfully for comments and documentation.

You can use vs code extensions to generate and update readme and changelog files.

Then if you follow documentation as code you can update your Confluence/whatever by copy pasting.

I also use it a lot for unit tests. It helps a lot when you have to write multiple edge cases, and it even finds new ones at times. Like putting a random int in an enum field (enumField = (myEnum)1000), I didn’t know you could do that…

Yeah. I’ve found new logic by asking GPT for improvements on my code or suggestions.

I cut the size of a function in half once using a suggested recursive loop and it blew my mind.

Feels like having a peer to do a code review on hand at all times.

Yeah, I also find it super helpful with unit tests, saves a lot of time.

Claude is my coding mentor. Wouldn’t want to work without it.

I run code snippets by three or four LLMs and the consensus is never there. Claude has been the worst for me.

Which one has been best? I’m only a hobbyist, but I’ve found Claude to be my favorite, and the best UI by a mile.

It’s just a fancier spell check and boilerplate generator

I’m a penetration tester and it increases my productivity a lot

I mainly use AI for learning new things. It’s amazing at trivial tasks.

as a dental assistant I can also confirm that AI has increased my productivity, checks notes, by a lot.

so it’s a vector of attack?

Penetration tester, huh? Sounds like a fun and reproductive job.

But it can be very HARD sometimes

Judging this article by its title (refuse to click). Calling BS. ChatGPT has been a game changer for me personally

To be honest ChatGPT pretty much killed the fun of programming.

What?

I’m fine with searching stack exchange. It’s much more useful. More info, more options, more understanding.

It introduced me to the basics of C# in a way that traditional googling at my previous level of knowledge would’ve made difficult.

I knew what I wanted to do and I didn’t know what was possible or how to ask without my question being closed as a duplicate with a link to an unhelpful post.

In that regard, it’s very helpful. If I had already known the language well enough, I can see it being less helpful.

I learned bash thanks to AI!

For years, all I did was copy and paste bash commands. And I didn’t understand arguments, how to chain things, or how it connects.

You do realize that a very thorough manual is but a `man bash` away? Perhaps it’s not the most accessible source available, but it makes up for that in completeness.

I believe accessibility is the part that makes LLMs helpful, when they are given an easy enough task to verify. Being able to ask a thing that resembles a human what you need instead of reading through possibly a textbook worth of documentation to figure out what is available and making it fit what you need is fairly powerful.

If it were actually capable of reasoning, I’d compare it to asking a linguist the origin of a word vs looking it up in a dictionary. I don’t think anyone disagrees that the dictionary would be more likely to be fully accurate, and also I personally would just prefer to ask the person who seemingly knows and, if I have reason to doubt, then go back and double-check.

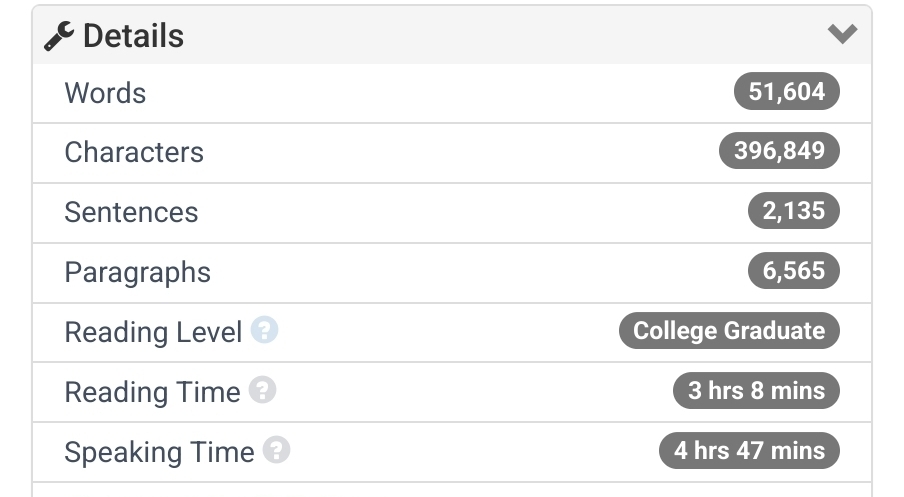

Here’s the manpage for bash’s statistics from wordcounter.net:

Perhaps LLMs can be used to gain some working vocabulary in a subject you aren’t familiar with. I’d say anything more than that is a gamble, since there’s no guarantee that hallucinations have not taken place. Remember that to spot incorrect info, you need to already be well acquainted with the matter at hand, which is the polar opposite of just starting to learn the basics.

What about just reading the documentation?

Even with amazing documentation, it can be hard to find the thing you’re looking for if you don’t know the right phrasing or terminology yet. It’s easily the most usable thing I’ve seen come out of “AI”, which makes sense. Using a Language Model to parse language is a very literal application.

The person I replied to was talking about learning the basics of a language… This isn’t about searching for something specific, this is about reading the very basic introduction to a language before trying to Google your way through it. Avoiding the basic documentation is always a bad idea. Replacing it with the LLMed version of the original documentation probably even more so.

Great for Coding 101 in a language I’m rusty with or otherwise unfamiliar.

Absolutely useless when it comes time to optimize a complex series of functions or upgrade to a new version of the .NET library. All the “AI” you need is typically baked into Intellisense or some equivalent anyway. We’ve had code-assist/advice features for over a decade and it’s always been mid. All that’s changed is the branding.

This is what I’ve used it for and it’s helped me learn, especially because it makes mistakes and I have to get them to work. In my case it was with Terraform and Ansible.

Haha, yeah. It really loves to refactor my code to “fix” bracket list initialization (e.g. `List<string> stringList = [];`) because it keeps not remembering that the syntax has been valid for a while.

Its newest favorite hangup is to incessantly suggest null checks without asking if it’s a nullable property that it’s checking first. I think I’m almost at the point where it’s becoming less useful to me.

Every now and then, GitHub Copilot saves me a few seconds suggesting some very basic solution that I am usually in the midst of creating. Is it worth the investment? No, at least not yet. It hasn’t once “beaten” me or offered an improved solution. It (more frequently than not) requires the developer to understand and modify what it proposes for its suggestions to be useful. Is it a useful tool? Sure, just not worth the price yet, and obviously not perfect. But, where I’m working is testing it out, so I’ll keep utilizing it.

The writer has a clear bias and a lack of a technical background (writing for Techies.com doesn’t count).

You don’t have to look hard to find devs saving time and learning something with AI coding assistants. There are plenty of them in this thread. This is just an opinion piece by someone who read a single study.

If you are already competent and you are aware that it doesn’t necessarily give you correct information, then it is really helpful. I know enough to sense when it is making shit up. Also it is, for some scenarios, faster and easier than looking at documentation. I like it personally. But it will not replace competent developers anytime soon.

This opinion is a breath of fresh air compared to the rest of tech journalism screaming “AI software engineer” after each new model release.