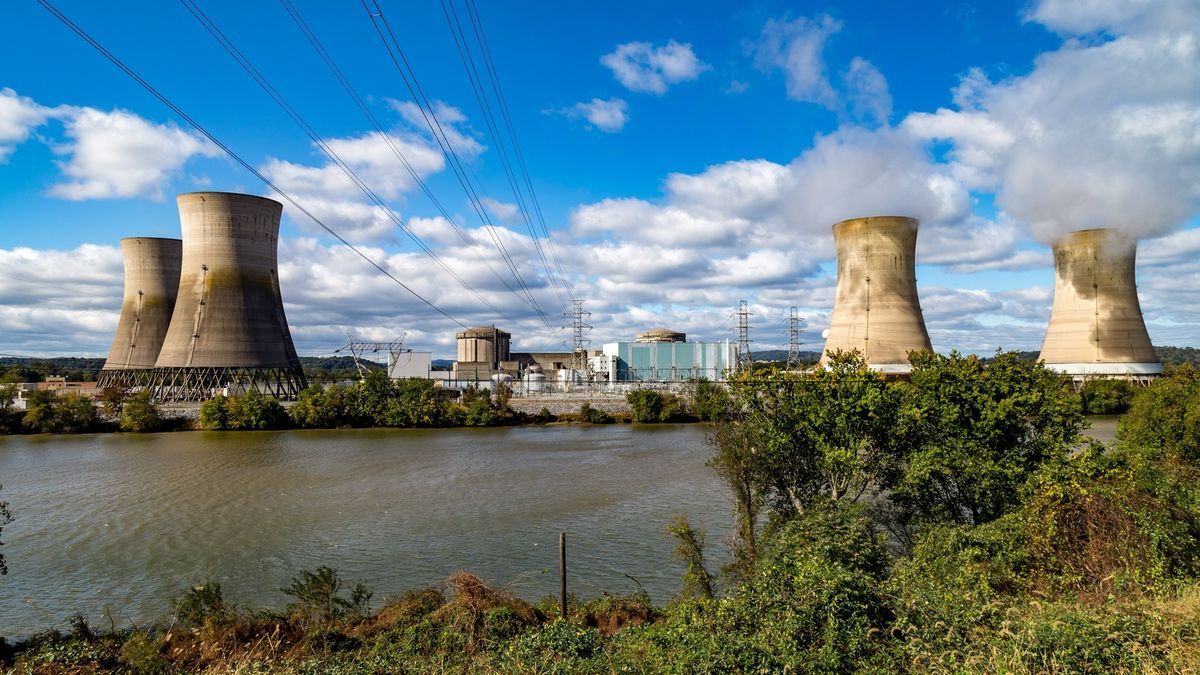

Modern AI data centers consume enormous amounts of power, and they look set to get even more power-hungry in the coming years as companies like Google, Microsoft, Meta, and OpenAI strive towards artificial general intelligence (AGI). Oracle has already outlined plans to use nuclear power plants for its 1-gigawatt data centers. Microsoft appears to be planning the same: it just inked a deal to restart a nuclear power plant to feed its data centers, reports Bloomberg.

I am not talking about things like ChatGPT, which rely more on raw compute and scaling than some other approaches and are hosted at massive data centers. I actually find their approach wasteful as well. I am talking about some of the open-weights models that use a fraction of the resources for similar quality of output. According to some industry experts, that will be the way forward anyway, as purely making models bigger has limits and is hella expensive.

Another thing to bear in mind is that training a model is more resource-intensive than using it, though that’s also being worked on.

You put power in and you get worthless garbage out. Do the world a favor and just mine crypto, try FoldingCoin out.

I’ve seen teachers use this stuff and get actually decent results. I’ve also seen papers where people use LLMs to hack into a computer, which is a damn sophisticated task. So you are either badly informed or just lying. While LLMs aren’t perfect and aren’t a replacement for humans, they are still very much useful. To believe otherwise is folly and shows your personal bias.

Anybody who uses a bullshit generator in any step of the education process is unqualified.