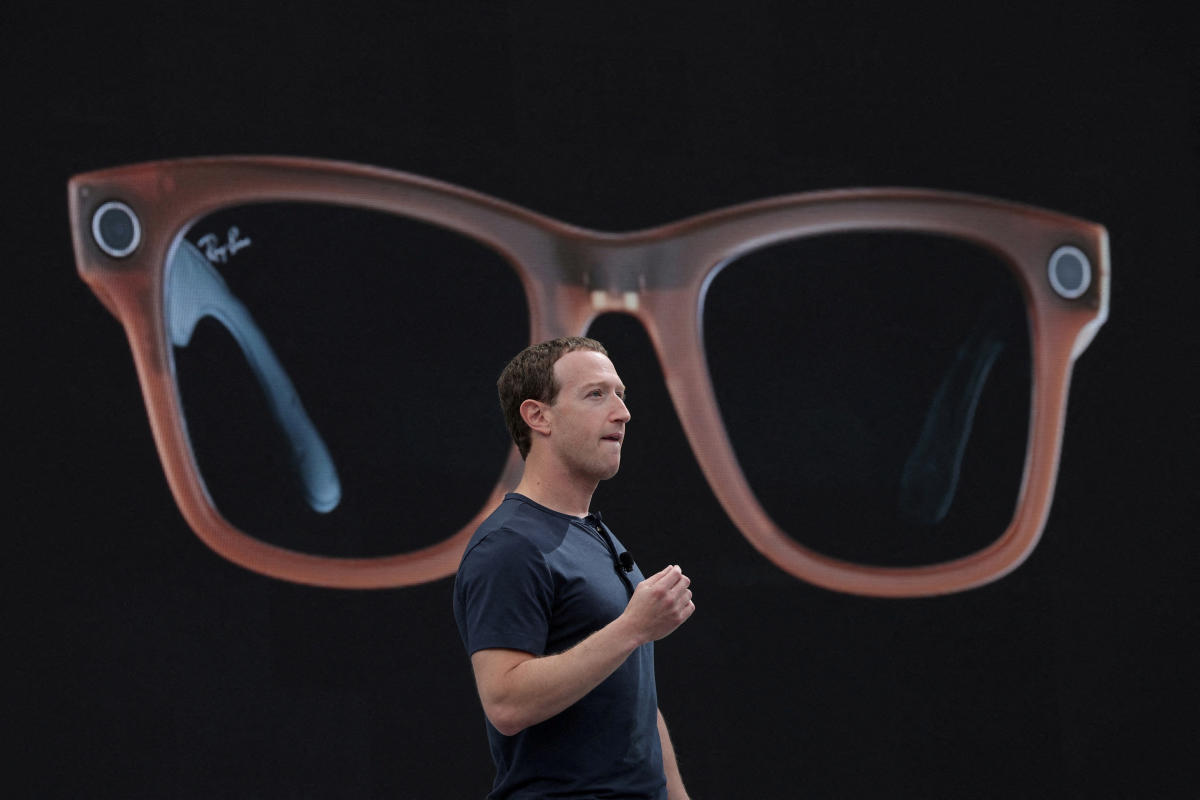

Meta on Tuesday announced the release of Llama 3.1, the latest version of its large language model that the company claims now rivals competitors from OpenAI and Anthropic. The new model comes just three months after Meta launched Llama 3 by integrating it into Meta AI, a chatbot that now lives in Facebook, Messenger, Instagram and WhatsApp and also powers the company’s smart glasses. In the interim, OpenAI and Anthropic have already released new versions of their own AI models, a sign that Silicon Valley’s AI arms race isn’t slowing down any time soon.

Meta said that the new model, called Llama 3.1 405B, is the first openly available model that can compete against rivals in general knowledge, math skills and translating across multiple languages. The model was trained on more than 16,000 NVIDIA H100 GPUs, currently the fastest chips available, which cost roughly $25,000 each, and can beat rivals on over 150 benchmarks, Meta claimed.

IMO the more interesting models are 70B and 8B, i.e. the ones you can actually host yourself, and (for basically the first time) open models distilled from such a large “parent” model.

But the release is a total dud among testers because they’re bugged with llama.cpp, lol.

I’ve got llama 3.1 8b running locally in open webui. What do you mean it’s bugged with llama.cpp?

Does anyone know what it takes to run 70b?

Seems like min 32gb RAM and 4070?

I mean I have a 24GB GPU, and it’s almost too slow for me. If someone makes an AQLM I may run it some.

You were able to load 70b just into GPU?

Yeah, an AQLM 70B will fit in 24GB with short context, and decent quality.
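For a rough sense of why that can work, here is a back-of-envelope sketch of weight memory only. The assumptions are mine, not from the thread: roughly 70e9 parameters, about 2 bits per weight for an AQLM-style quant, and no accounting for KV cache, activations, or runtime overhead (which is why context has to stay short).

```python
# Rough estimate of memory needed just to hold the weights.
# Assumptions: ~70e9 parameters; bits-per-weight values are illustrative;
# KV cache, activations, and runtime overhead are NOT included.

GIB = 2**30


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Return the size of the weights in GiB at a given bit width."""
    return n_params * bits_per_weight / 8 / GIB


if __name__ == "__main__":
    n_params = 70e9  # assumed parameter count for the 70B model
    for label, bits in [("fp16", 16), ("8-bit", 8), ("4-bit", 4), ("~2-bit", 2)]:
        size = weight_memory_gib(n_params, bits)
        fits = "fits" if size < 24 else "does not fit"
        print(f"{label:>7}: {size:6.1f} GiB of weights, {fits} in 24 GiB")
```

Under these assumptions only the ~2-bit case leaves headroom on a 24GB card, and whatever is left over has to cover the KV cache.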

You never hear about it, mostly because it’s so hard to quantize in the first place, but also because it’s not a GGUF so most people ignore the format, lol.

llama.cpp, the underlying engine, doesn’t support extended RoPE yet. Basically this means long context doesn’t work and short context could be messed up too.
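For context, the “extended RoPE” here rescales the rotary frequencies so the model can go past its original training context. Below is a minimal Python sketch of that kind of frequency scaling, written from my recollection of Meta’s reference code. The constants (scale factor 8, low/high frequency factors 1 and 4, original context 8192, base 500000, head dim 128) are assumptions to check against the model’s own config, and this is not llama.cpp’s implementation.

```python
import math

# Sketch of Llama-3.1-style RoPE frequency scaling.
# All default constants below are assumptions; verify against the model config.


def base_inv_freqs(head_dim: int = 128, theta: float = 500000.0) -> list[float]:
    """Standard RoPE inverse frequencies: theta^(-2i/d) for i in [0, d/2)."""
    return [theta ** (-(2 * i) / head_dim) for i in range(head_dim // 2)]


def scale_inv_freqs(
    inv_freqs: list[float],
    factor: float = 8.0,
    low_freq_factor: float = 1.0,
    high_freq_factor: float = 4.0,
    original_ctx: int = 8192,
) -> list[float]:
    """Leave high frequencies alone, divide low ones by `factor`, blend between."""
    low_freq_wavelen = original_ctx / low_freq_factor
    high_freq_wavelen = original_ctx / high_freq_factor
    scaled = []
    for f in inv_freqs:
        wavelen = 2 * math.pi / f
        if wavelen < high_freq_wavelen:
            # Short wavelengths (local position info) are kept unchanged.
            scaled.append(f)
        elif wavelen > low_freq_wavelen:
            # Long wavelengths are stretched by the full factor.
            scaled.append(f / factor)
        else:
            # Linear blend between the scaled and unscaled frequency.
            smooth = (original_ctx / wavelen - low_freq_factor) / (
                high_freq_factor - low_freq_factor
            )
            scaled.append((1 - smooth) * f / factor + smooth * f)
    return scaled


if __name__ == "__main__":
    base = base_inv_freqs()
    scaled = scale_inv_freqs(base)
    changed = sum(1 for b, s in zip(base, scaled) if b != s)
    print(f"{changed} of {len(base)} frequencies differ after scaling")
```

An engine that skips this step would use the unscaled frequencies everywhere, so the low-frequency components would not match what the model was trained with.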

I am also hearing rumblings of a messed up chat template?

Basically with any LLM in any UI that uses a GGUF, you have to be very careful of bugs you wouldn’t get in the huggingface-based backends. A lot of models run without errors, but not quite right.

I wouldn’t call it a “dud” on that basis. Lots of models come out with lagging support on the various inference engines; it’s a fast-moving field.